Sometimes we need some data on the web and perferable in time series. For this post, we will get the weather report every 15 minutes.

This full automation requires 3 applications (Task scheduler, Excel and python.exe), a Python script and a batch file. This is a full total automation, which means you just need to power on your computer. My earlier post on Running a Python script using a batch file is semi-automation. But of course we can make it more automated by adding a loop and a timer inside the Python script, but I prefer using the task scheduler as you can run concurrent task fully automated.

The below Python script get the necessary data from the website, using Selenium and webdriver to crawl into the web’s HTML. It is written using Notepad and saved with .py extension. The code is originally written and test run on VS Code. The code also put the extracted data into the respective cells in the Excel workbook.

from urllib.request import urlopen

from urllib.error import HTTPError

from bs4 import BeautifulSoup

#import json

import re

from selenium import webdriver

import time

import datetime

import csv

from openpyxl import load_workbook

try:

html=urlopen("........website url")

except HTTPError in e:

print ("Error in http address. Please check if address is correct or available.")

else:

BsObj=BeautifulSoup(html)

print("ok")

BsObj_1=BsObj.find("div",{"class":"col-xs-12 col-sm-4 col-md-4"})

BsObj_2=BsObj_1.find("div",{"class":"title"}).h2

print(BsObj_2.get_text())

#print(BsObj.find("span", id=re.compile("time")).siblings)

#print(BsObj.select_one("span[id=time]").text)

driver = webdriver.Firefox()

driver.get("........website url")

print(driver.find_element_by_id("time").text)

#data1 = driver.find_element_by_id("time").text

print(driver.find_element_by_id("psi24_range").text)

PsiData = driver.find_element_by_id("psi24_range").text

TempData = driver.find_element_by_id("temperature").text

ConditionData = driver.find_element_by_id("weather_desc").text

SomeOtherData = driver.find_element_by_id("SomeOther").text

#Time delay

time.sleep(60)

driver.close()

Date = datetime.datetime.now().date()

Time = datetime.datetime.now().time()

wb = load_workbook(r"C:\Users\xxxxxxx\xxxxxxxxx\xxxxxxxxxx.xlsx")

sheet = wb['Weather_Data']

MaxRow = sheet.max_row +1

# ***********to fill up the next empty row in the respective columns *************

sheet.cell(column=1, row = sheet.max_row+1, value = Date)

sheet.cell(column=2, row = MaxRow, value = Time)

sheet.cell(column=3, row = MaxRow, value = TempData)

sheet.cell(column=4, row = MaxRow, value = ConditionData)

sheet.cell(column=5, row = MaxRow, value = PsiData)

sheet.cell(column=6, row = MaxRow, value = SomeOtherData)

wb.save(r"C:\Users\xxxxxxx\xxxxxxxxx\xxxxxxxxxx.xlsx")

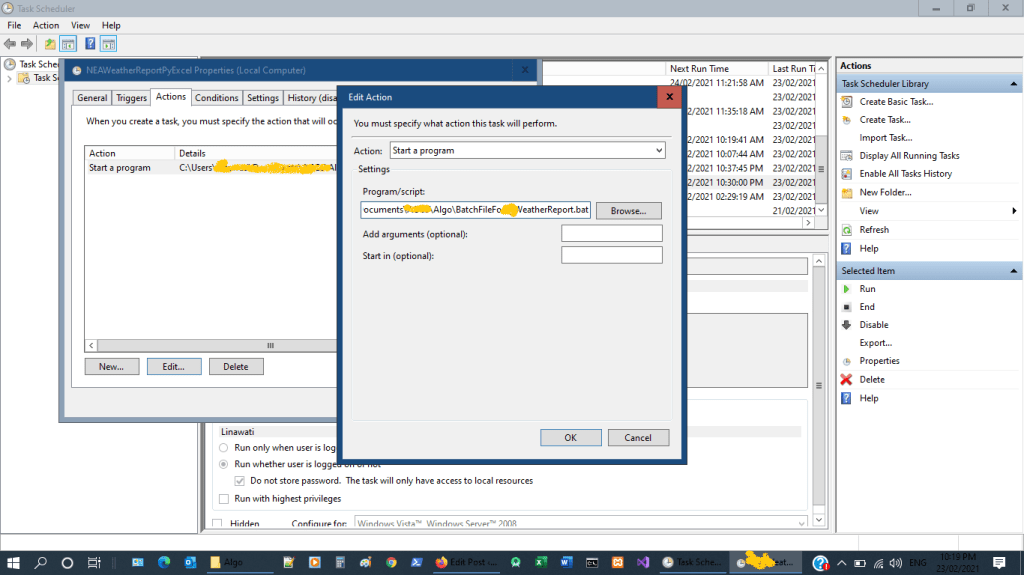

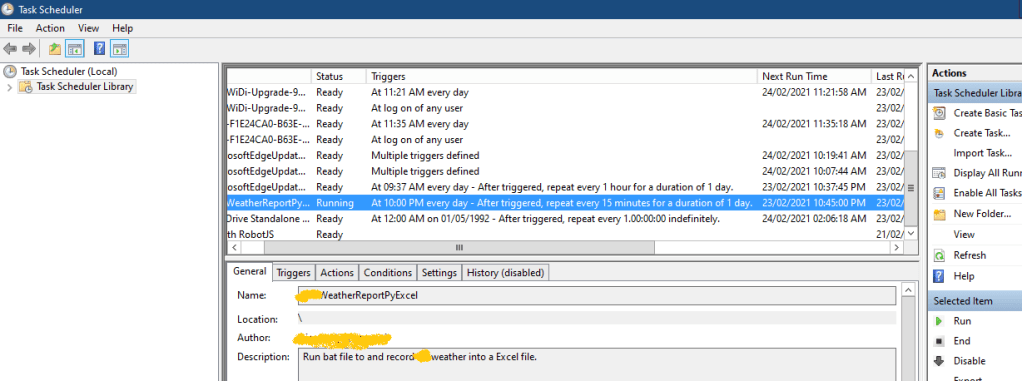

Below is the screen shot of the Task Scheduler. To learn more about setting the task scheduler, you can go to SpreadSheet Guro website (https://www.thespreadsheetguru.com/blog/how-to-automatically-run-excel-vba-macros-daily) . The linked post uses the task scheduler to run a VB script (.vbs). Here we use it to run a batch file (.bat). You can set the start time, stop time, interval from the task scheduler, all you need is to on the computer and log in.

↑ The batch file location is keyed into Actions tab —>Program/script.

So below is the batch file, written using Notepad and saved with .bat extension.

goto :start

You can put your comments here....

:start

@echo off

"C:\Python\Python37-32\python.exe" "C:\Users\Linawati\Documents\ACCS\Algo\WebScraping_NEA.py"

exit

↑ @echo off: the script will not appear in the CMD. Follow by the location of the python.exe and the location of the .py file. ‘exit’ will close the CMD window after running.

When the task is running the status will show “Running”. You have to refresh the window to see the changes.

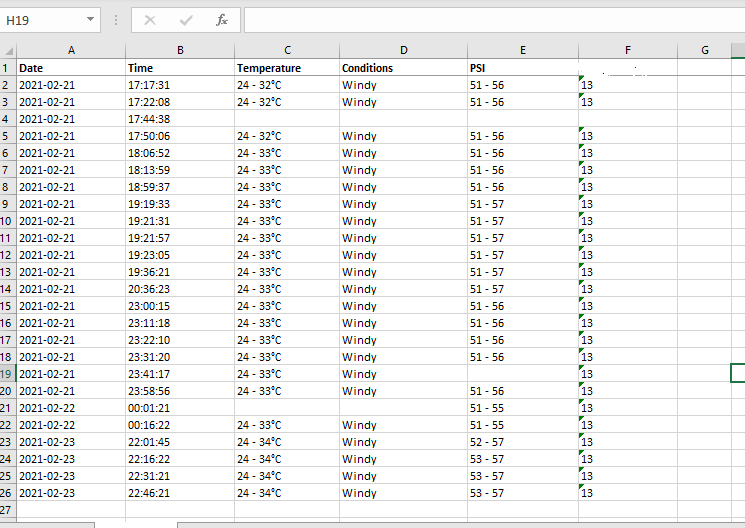

And finally the data that was collected and saved into Excel sheet as shown below ↓ . The last 4 rows are data collected to show for this post. So you can build your own automatic web data logger. The hardest part for me is the Python script, so let’s learn to code and build our own logger. Keep coding, keep learning, keep building.